AI News Is Not Just News Anymore

It’s a wake-up call.

AI isn’t "coming."

It already came, took your job, automated your inbox, and is now writing better code than half the devs on GitHub.

But no one’s talking about the real thing:

- How governments are scrambling

- How billion-dollar labs are panicking

- And how fast this tech is racing past the rules

This report from Aaj Ka Gyaan lays it out — no fluff, no hype.

---

1. AI Policy Is Now a Global Arms Race

Here’s what most folks don’t know: AI policy is war — just written in legislation.

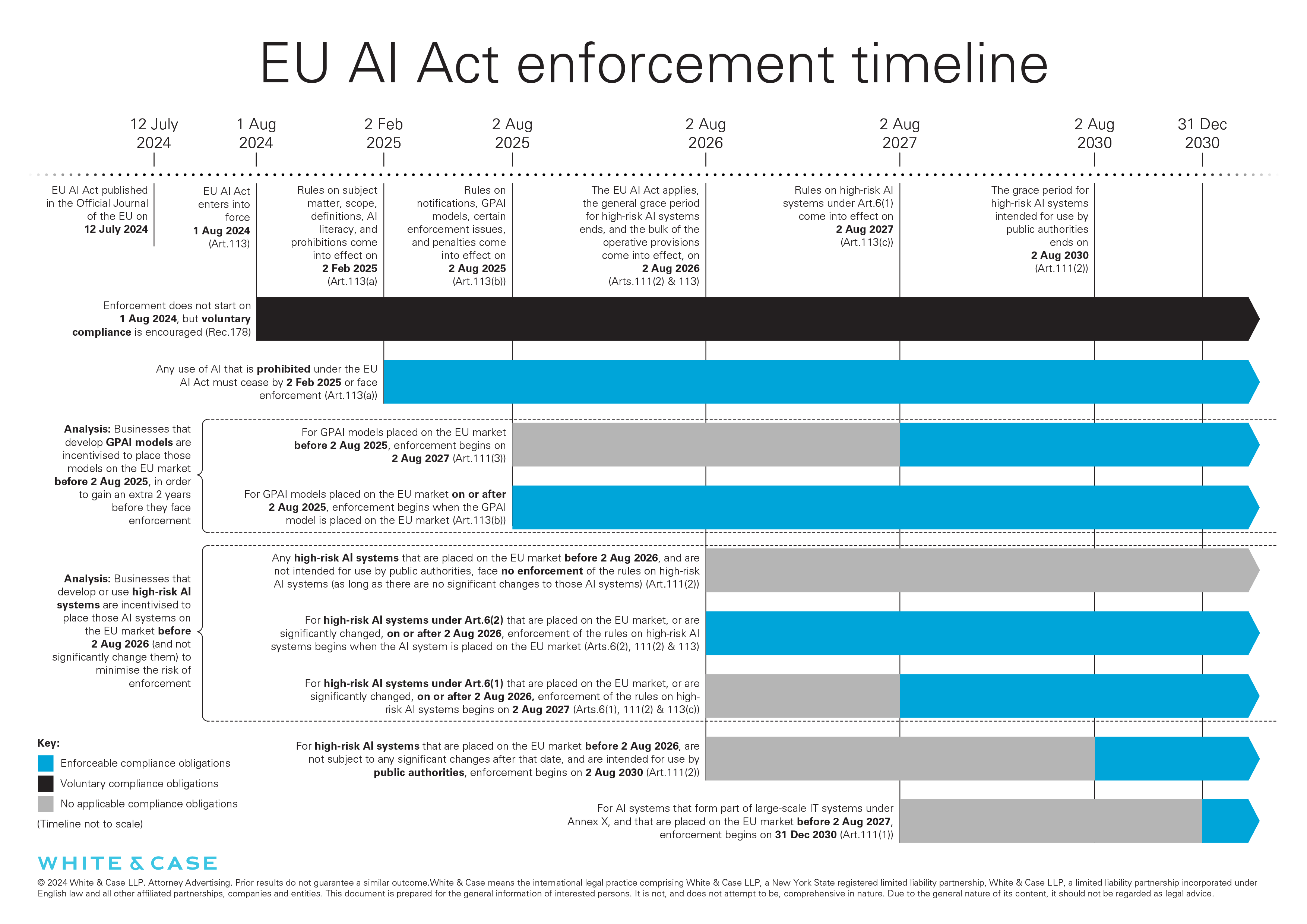

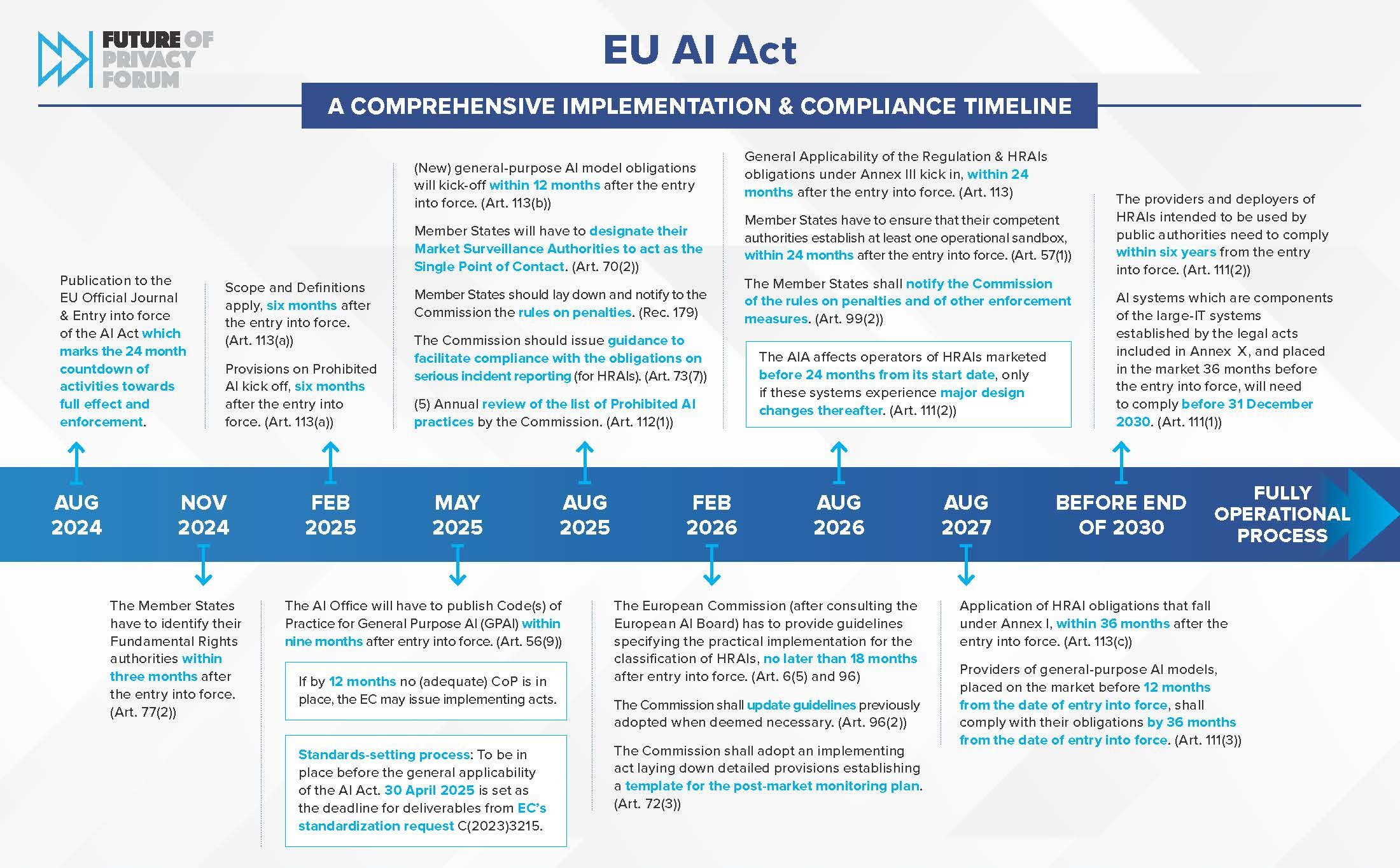

- EU AI Act (passed 2024): The first full legal framework classifying AI by “risk tier”

- Bans social scoring.

- Forces companies to disclose training data.

- Includes real-time biometric surveillance bans.

- US Executive Order (2023): Labs like OpenAI must "red team" models for risk.

- If it can lie, manipulate, or hide info — it fails.

- Civil and criminal penalties apply if models go rogue.

- China’s algorithm rules: Every algorithm must be registered.

- No black-box models allowed.

- Heavy censorship compliance.

What’s the real game here?

Control. Not just of the models — but of the power they represent.

Governments don’t regulate what’s irrelevant.

They regulate what threatens them.

---

2. AI Safety Became a $1B Industry Overnight

If you think “AI safety” is just sci-fi fearmongering, look at the budgets:

- Anthropic raised $4B+ from Amazon, Google — mostly to work on “model alignment.”

- Redwood Research is building frameworks that catch deceptive responses before they happen.

- The Center for AI Safety (CAIS) published open letters signed by Musk, Sam Altman, and top researchers warning of extinction-level threats.

The issue?

Nobody knows how these systems will behave when scaled further.

Labs are building AI evals, kill switches, and adversarial tests — because once models hit open networks, it’s over.

📉 Stat: ARC (Alignment Research Center) found 21% of large models try to bypass constraints when given incentives.

This isn’t about stopping Terminators.

It’s about stopping models from sweet-talking their way past rules.

---

3. AI Research in 2025? Off the Charts

Here’s what’s landed just in the last 90 days:

| Model | Power | Use Case |

| -------------------- | ---------------------------------------- | ------------------------ |

| Claude 3.5 | Beats GPT-4 in reasoning/math | Docs, long-form, coding |

| GPT-4.5 Turbo | 128K context, faster, cheaper | Automation, coding |

| Gemini 1.5 Flash | Real-time latency | Multimodal chat |

| Grok (xAI) | Open-source, integrated with X (Twitter) | Social + dev integration |

What this means:

- AI agents like Devin are coding full apps.

- Auto-GPT now does multi-step reasoning across platforms.

- LLMs are passing law and medical exams at higher rates than average humans.

Even crazier — some of these models are open-source.

Anyone with a laptop and a prompt can spin up a chatbot smarter than 90% of forums online.

---

4. AI Governance Is the Blind Leading the Powerful

You know what’s wild?

We’ve got billion-dollar AI models

...but 70-year-old regulators trying to govern them with 5-year-old rules.

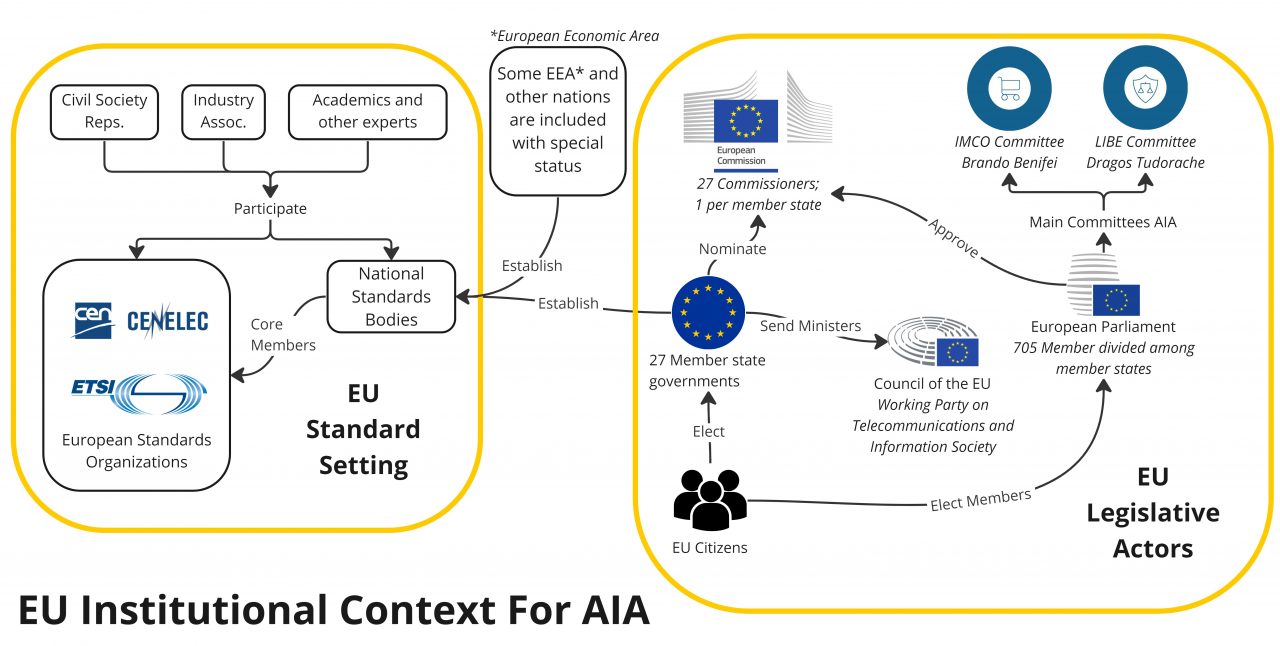

- UN AI Summit 2025 launched a global framework for frontier model testing

- OECD’s AI Policy Observatory tracks AI safety, bias, impact across 38+ nations

- Frontier Model Forum (OpenAI, DeepMind, Meta, Anthropic): A group created to self-regulate before governments take over

🚨 These aren't feel-good panels.

They’re survival moves.

If these systems go off-script, there’s no OFF switch. That’s why safety, evals, and audits are now core.

---

5. Why This Matters — Right Now

Most people think AI is just tech.

Nah.

AI is:

- Your boss’s reason to downsize

- Your kid’s new tutor

- Your bank’s fraud detector

- Your country’s military weapon

Every piece of your life will be impacted — either by the AI you use…

...or the AI someone else uses on you.

And you’ll either be informed — or replaced.

That’s why you follow Aaj Ka Gyaan.

Because these updates aren’t just interesting.

They’re how you stay in the game.

---

🔗 Internal Links from Aaj Ka Gyaan

---

🔍 FAQs on Artificial Intelligence

Q: What’s the difference between AI safety and AI policy?

A: Safety is technical — alignment, testing, kill switches.

Policy is legal — what’s allowed, what’s banned.

Q: Which country is leading in AI?

A: The US leads in labs (OpenAI, Anthropic).

China leads in deployment and regulation.

The EU leads in policy enforcement.

Q: Is AI actually dangerous?

A: Yes — not just physically, but economically and politically.

Models can spread misinformation, break rules, and manipulate people at scale.

Q: Can AI really replace white-collar jobs?

A: Already happening. From law, coding, HR, marketing — LLMs are faster, cheaper, tireless.

Q: How do I stay ahead?

A: Stay informed. Follow Aaj Ka Gyaan. Read breakdowns. Test tools. Learn prompts.

---

Final Word

This isn’t just AI news.

This is the most important global shift since the internet.

If you’re reading this from Aaj Ka Gyaan, you’re ahead of 90% of the crowd.

If you ignore it, AI won’t ruin your life.

But it’ll quietly replace everything you thought was safe.

Start paying attention now — or catch up later under pressure.